10. MediaPipe development

MediaPipe GitHub: https://github.com/google/mediapipe

MediaPipe official website: https://google.github.io/mediapipe/

dlib official website: http://dlib.net/

dlib GitHub: https://github.com/davisking/dlib

10.1. Introduction

MediaPipe is an open-source framework developed by Google for building machine learning applications that process streaming data. It organizes processing as a graph-based pipeline that can consume many kinds of data sources, such as video, audio, sensor data, and other time series data. MediaPipe is cross-platform: it runs on embedded platforms (Raspberry Pi, etc.), mobile devices (iOS and Android), workstations, and servers, and it supports mobile GPU acceleration. It provides cross-platform, customizable ML solutions for live and streaming media.

The core framework of MediaPipe is implemented in C++, with support for languages such as Java and Objective-C. Its main concepts are the packet (Packet), the data stream (Stream), the calculator (Calculator), the graph (Graph), and the subgraph (Subgraph).
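To make these concepts concrete, here is a toy model in plain Python. It is not the MediaPipe API, and every class and function name in it is hypothetical. It only illustrates how timestamped packets travel along a stream through a chain of calculators that together form a graph.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, Iterator, List


@dataclass(frozen=True)
class Packet:
    """A payload plus the timestamp that orders it within a stream."""
    timestamp: int
    payload: Any


# In this toy model a calculator is just a function from packet to packet.
Calculator = Callable[[Packet], Packet]


def make_calculator(fn: Callable[[Any], Any]) -> Calculator:
    """Wrap a payload function so that it preserves the packet timestamp."""
    def run(packet: Packet) -> Packet:
        return Packet(packet.timestamp, fn(packet.payload))
    return run


class Graph:
    """A linear graph: the output stream of one calculator feeds the next."""

    def __init__(self, calculators: List[Calculator]):
        self.calculators = calculators

    def process(self, stream: Iterable[Packet]) -> Iterator[Packet]:
        for packet in stream:
            for calculator in self.calculators:
                packet = calculator(packet)
            yield packet


# Example: a two-node graph that doubles each sample and then adds one.
graph = Graph([make_calculator(lambda x: x * 2), make_calculator(lambda x: x + 1)])
input_stream = [Packet(t, value) for t, value in enumerate([10, 20, 30])]
outputs = list(graph.process(input_stream))
print([(p.timestamp, p.payload) for p in outputs])  # [(0, 21), (1, 41), (2, 61)]
```

In this picture, a subgraph would correspond to a whole `Graph` reused as a single calculator inside a larger graph. Real MediaPipe graphs are not restricted to a linear chain.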

Features of MediaPipe:

- End-to-end acceleration: built-in fast ML inference and processing, accelerated even on commodity hardware.

- Build once, deploy anywhere: a unified solution for Android, iOS, desktop/cloud, web, and IoT.

- Ready-to-use solutions: cutting-edge ML solutions that showcase the full capabilities of the framework.

- Free and open source: the framework and solutions are released under Apache 2.0 and are fully extensible and customizable.

Deep Learning Solutions in MediaPipe

| Face Detection | Face Mesh | Iris | Hands | Pose | Holistic |
|---|---|---|---|---|---|
| Hair Segmentation | Object Detection | Box Tracking | Instant Motion Tracking | Objectron | KNIFT |

| Solution | Android | iOS | C++ | Python | JS | Coral |
|---|---|---|---|---|---|---|
| Face Detection | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| Face Mesh | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Iris | ✅ | ✅ | ✅ | | | |
| Hands | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Pose | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Holistic | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Selfie Segmentation | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Hair Segmentation | ✅ | | ✅ | | | |
| Object Detection | ✅ | ✅ | ✅ | | | ✅ |
| Box Tracking | ✅ | ✅ | ✅ | | | |
| Instant Motion Tracking | ✅ | | | | | |
| Objectron | ✅ | | ✅ | ✅ | ✅ | |
| KNIFT | ✅ | | | | | |
| AutoFlip | | | ✅ | | | |
| MediaSequence | | | ✅ | | | |
| YouTube 8M | | | ✅ | | | |

10.2. Use

Jetson board / Raspberry Pi 4B

```
# ---------------------------------- ROS ----------------------------------
roslaunch transbot_mediapipe cloud_Viewer.launch        # Point cloud viewing: supports 01~04
roslaunch transbot_mediapipe 01_HandDetector.launch     # Hand detection
roslaunch transbot_mediapipe 02_PoseDetector.launch     # Pose detection
roslaunch transbot_mediapipe 03_Holistic.launch         # Overall detection
roslaunch transbot_mediapipe 04_FaceMesh.launch         # Face detection
roslaunch transbot_mediapipe 05_FaceEyeDetection.launch # Face recognition
# -------------------------------- not ROS --------------------------------
cd ~/transbot_ws/src/transbot_mediapipe/scripts # Enter the directory where the source code is located
python3 06_FaceLandmarks.py        # Face special effects
python3.7 07_FaceDetection.py      # Face detection
python3.7 08_Objectron.py          # Three-dimensional object recognition
python3.7 09_VirtualPaint.py       # Brush
python3.7 10_HandCtrl.py           # Finger control
python3.7 11_GestureRecognition.py # Gesture recognition
python3.7 12_PalmTracker.py        # The car and the robotic arm follow the palm
```

Raspberry Pi 5

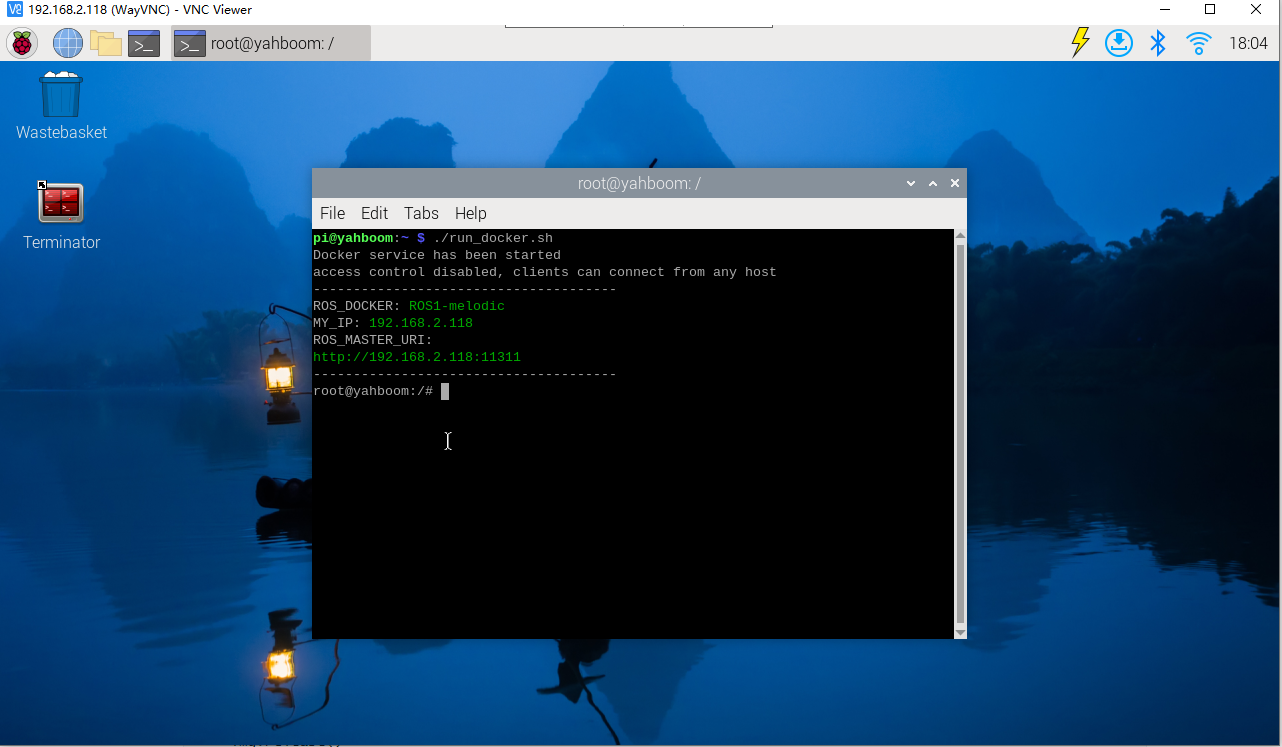

Before running, confirm that the large program has been permanently closed.

Enter Docker

Note: If a terminal that starts Docker automatically is available, or a Docker terminal is already open, run the commands directly in that terminal; there is no need to start Docker manually.

Start Docker manually

```
./run_docker.sh
```

```
# ---------------------------------- ROS ----------------------------------
roslaunch transbot_mediapipe cloud_Viewer.launch        # Point cloud viewing: supports 01~04
roslaunch transbot_mediapipe 01_HandDetector.launch     # Hand detection
roslaunch transbot_mediapipe 02_PoseDetector.launch     # Pose detection
roslaunch transbot_mediapipe 03_Holistic.launch         # Overall detection
roslaunch transbot_mediapipe 04_FaceMesh.launch         # Face detection
roslaunch transbot_mediapipe 05_FaceEyeDetection.launch # Face recognition
# -------------------------------- not ROS --------------------------------
cd ~/transbot_ws/src/transbot_mediapipe/scripts # Enter the directory where the source code is located
python3 06_FaceLandmarks.py        # Face special effects
python3.8 07_FaceDetection.py      # Face detection
python3.8 08_Objectron.py          # Three-dimensional object recognition
python3.8 09_VirtualPaint.py       # Brush
python3.8 10_HandCtrl.py           # Finger control
python3.8 11_GestureRecognition.py # Gesture recognition
python3.8 12_PalmTracker.py        # The car and the robotic arm follow the palm
```

Note the following during use:

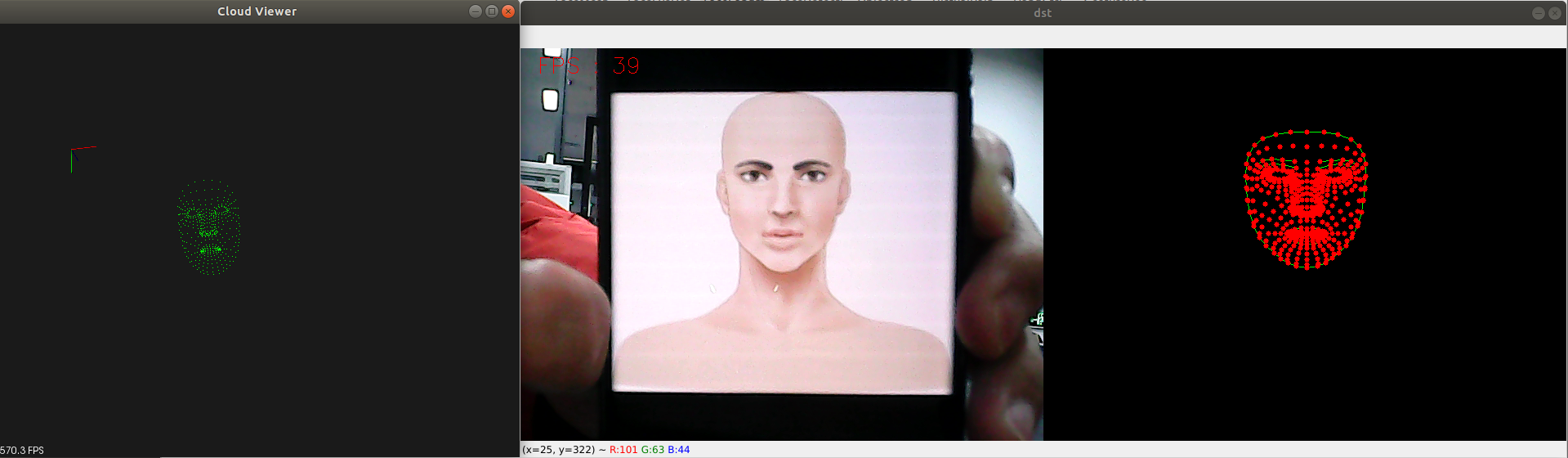

- Hand detection, pose detection, overall detection, and face detection all support point cloud viewing; the example shown uses face detection.

- Press the [q] key to exit any of the functions.

- Overall detection: combines hand, face, and body pose detection.

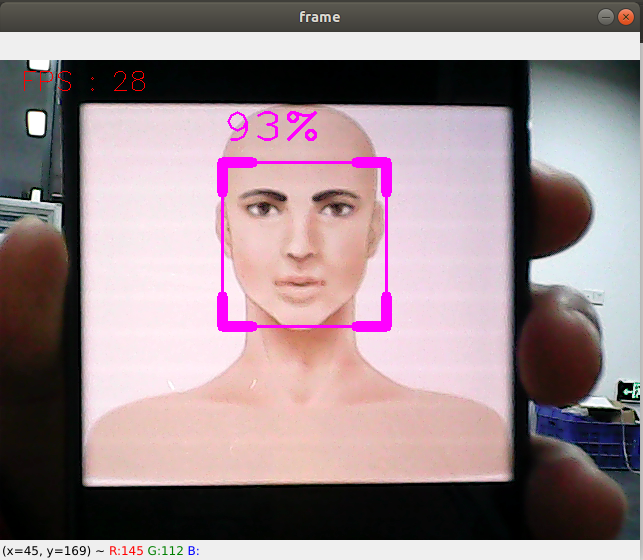

- Three-dimensional object recognition: four categories of objects can be recognized: ['Shoe', 'Chair', 'Cup', 'Camera']. Press the [f] key to switch the object to recognize. On the Jetson series, the keyboard cannot be used to switch objects; change the [self.index] parameter in the source code instead.

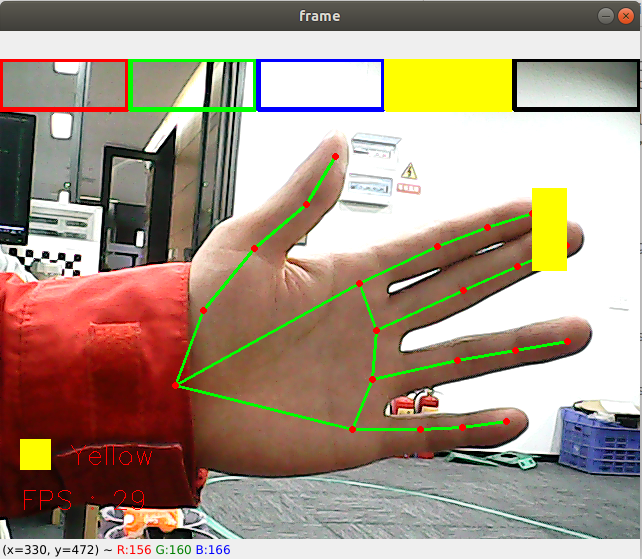

- Brush: When the index finger and middle finger of the right hand are held together, the brush is in the selection state and a color selection box pops up. Moving the two fingertips onto a color selects it (black is the eraser). When the index finger and middle finger are separated, the brush is in the drawing state and you can draw freely on the drawing board.

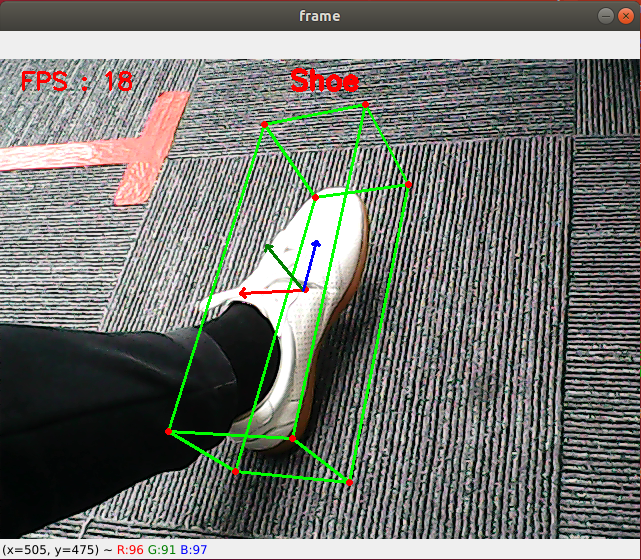

- Finger control: Press the [f] key to switch the recognition effect.

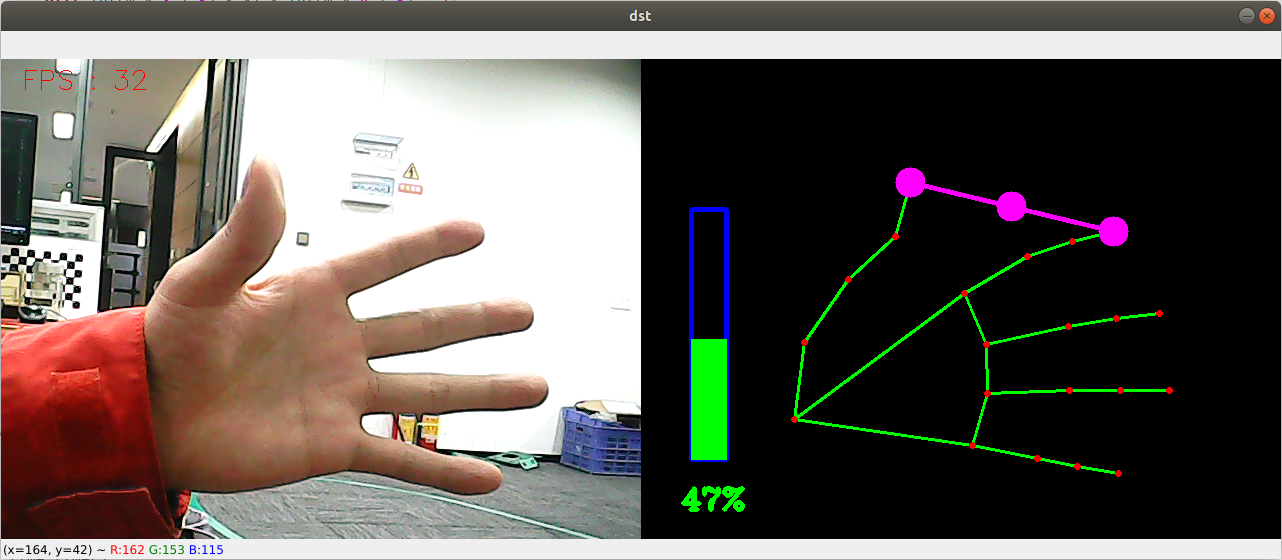

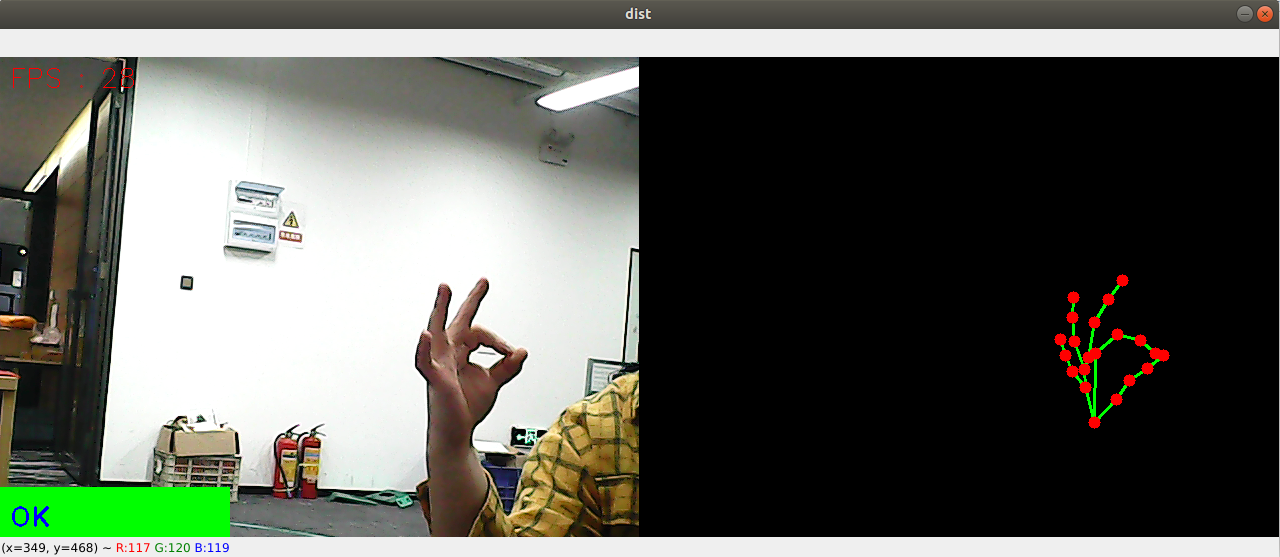

- Gesture recognition: Designed with the right hand in mind; gestures are recognized accurately when certain conditions are met. The recognized gestures are [Zero, One, Two, Three, Four, Five, Six, Seven, Eight, Ok, Rock, Thumb_up (like), Thumb_down (dislike), Heart_single (one-handed finger heart)], 14 categories in total.

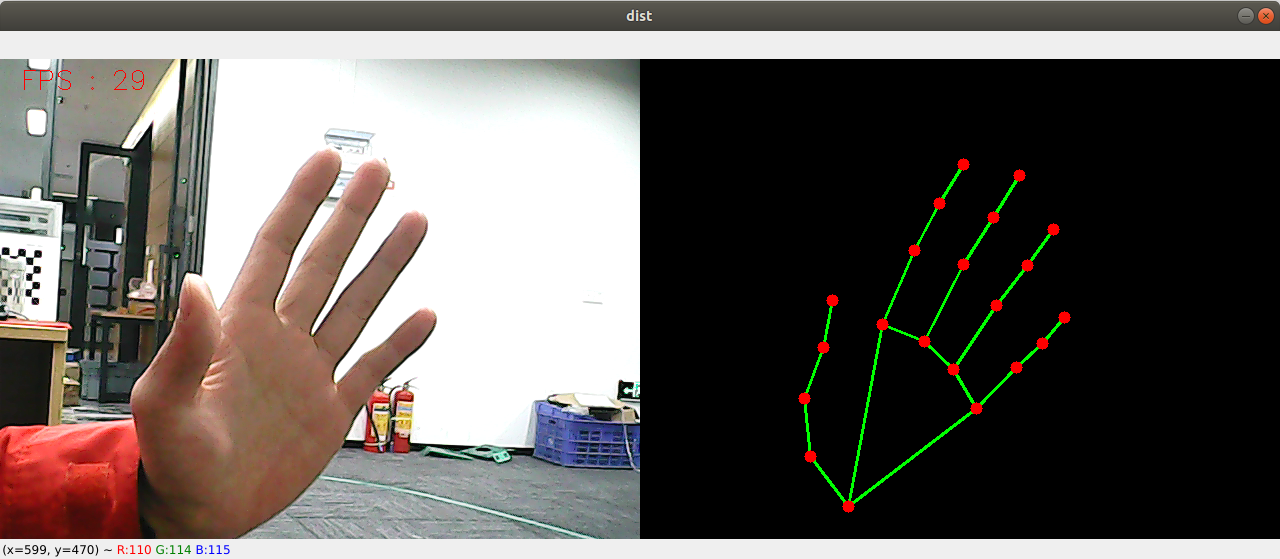
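The brush rule described above (fingers together selects, fingers apart draws) can be sketched as a distance test on two fingertips. This is a minimal sketch and not the code in 09_VirtualPaint.py: the function name, the 0.25 threshold, and the normalization by palm length are all assumptions. The landmark indices (0 wrist, 8 index fingertip, 9 base of the middle finger, 12 middle fingertip) follow the MediaPipe Hands numbering.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]

WRIST, INDEX_TIP, MIDDLE_MCP, MIDDLE_TIP = 0, 8, 9, 12


def _distance(a: Point, b: Point) -> float:
    """Euclidean distance between two 2D points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def brush_mode(landmarks: Sequence[Point], ratio_threshold: float = 0.25) -> str:
    """Return "select" when index and middle fingertips are together, else "draw".

    landmarks: 21 (x, y) hand points, in pixels.
    ratio_threshold: hypothetical cut-off on (fingertip gap / palm length).
    """
    palm_length = _distance(landmarks[WRIST], landmarks[MIDDLE_MCP])
    if palm_length == 0:
        raise ValueError("degenerate hand: wrist and middle finger base coincide")
    gap = _distance(landmarks[INDEX_TIP], landmarks[MIDDLE_TIP])
    return "select" if gap / palm_length < ratio_threshold else "draw"


# Synthetic hand: only the four landmarks used above get meaningful positions.
hand = [(0.0, 0.0)] * 21
hand[WRIST] = (100.0, 300.0)
hand[MIDDLE_MCP] = (100.0, 200.0)  # palm length = 100
hand[INDEX_TIP] = (90.0, 100.0)
hand[MIDDLE_TIP] = (105.0, 100.0)  # gap = 15, ratio = 0.15
print(brush_mode(hand))            # select

hand[INDEX_TIP] = (60.0, 110.0)    # fingers spread apart, ratio is about 0.46
print(brush_mode(hand))            # draw
```

Dividing the fingertip gap by the palm length keeps the decision roughly independent of how far the hand is from the camera.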

| 01. Hand detection |  |

|---|---|

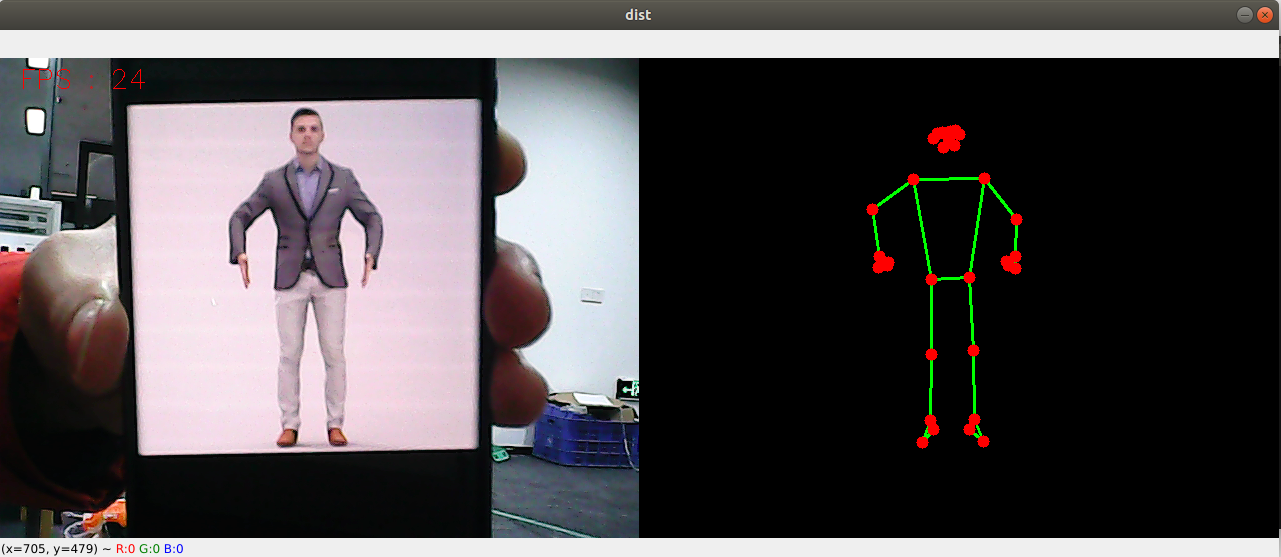

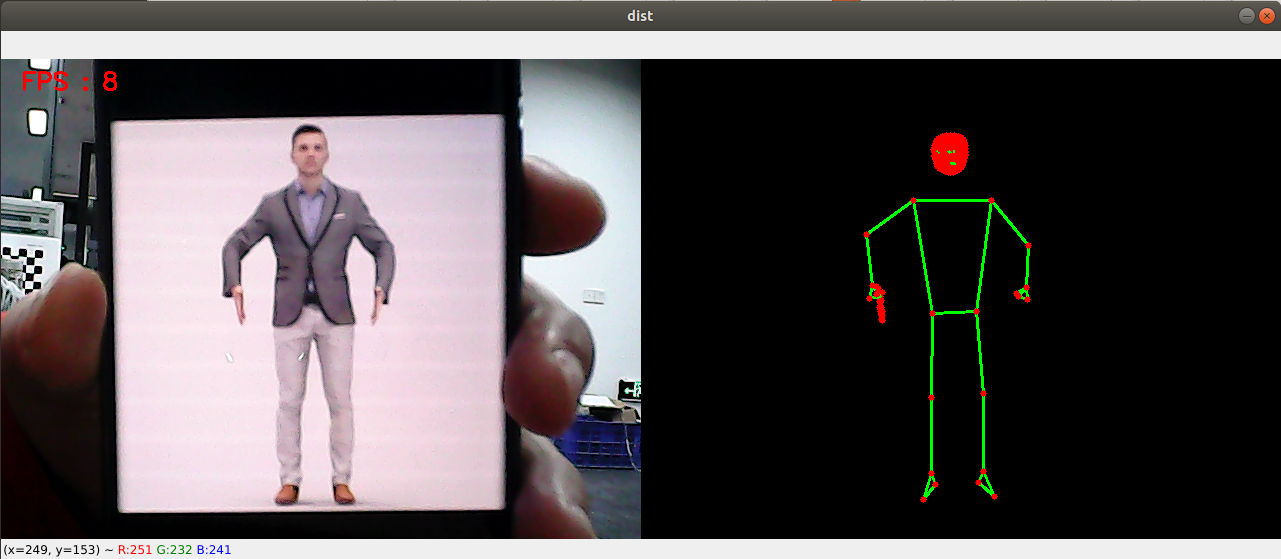

| 02. Attitude detection |  |

| 03. Overall inspection |  |

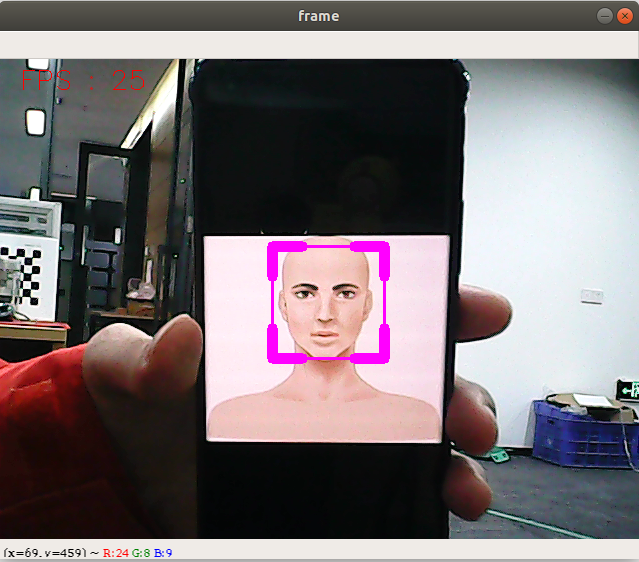

| 04. Face detection |  |

| :--------------: | --------------------------- |

|

| :--------------: | --------------------------- |

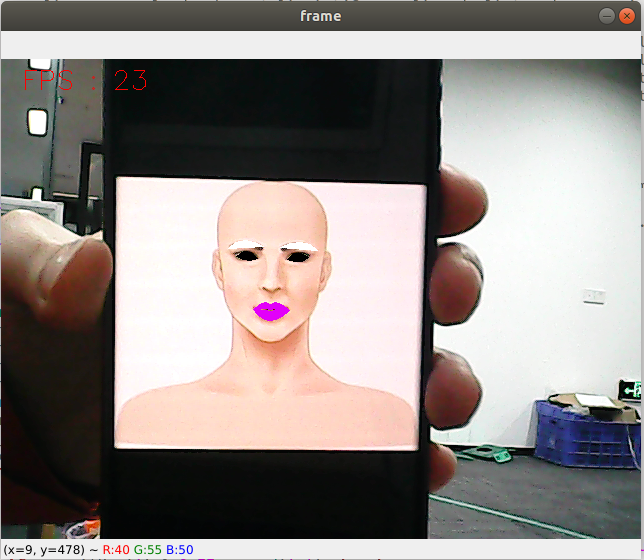

| 05. Face recognition | 06. Face special effects | 07. Face detection |

|---|---|---|

/> /> |  |  |

| 08. Three-dimensional object recognition | 09, Paintbrush | |

|  |

| 10. Finger control |  |

|---|---|

| 11. Gesture recognition |  |

| 12. Palm follow (Note: If the robot arm blocks the camera, you can press the [r] key to reset the robot arm.) |

10.3. MediaPipe Hands

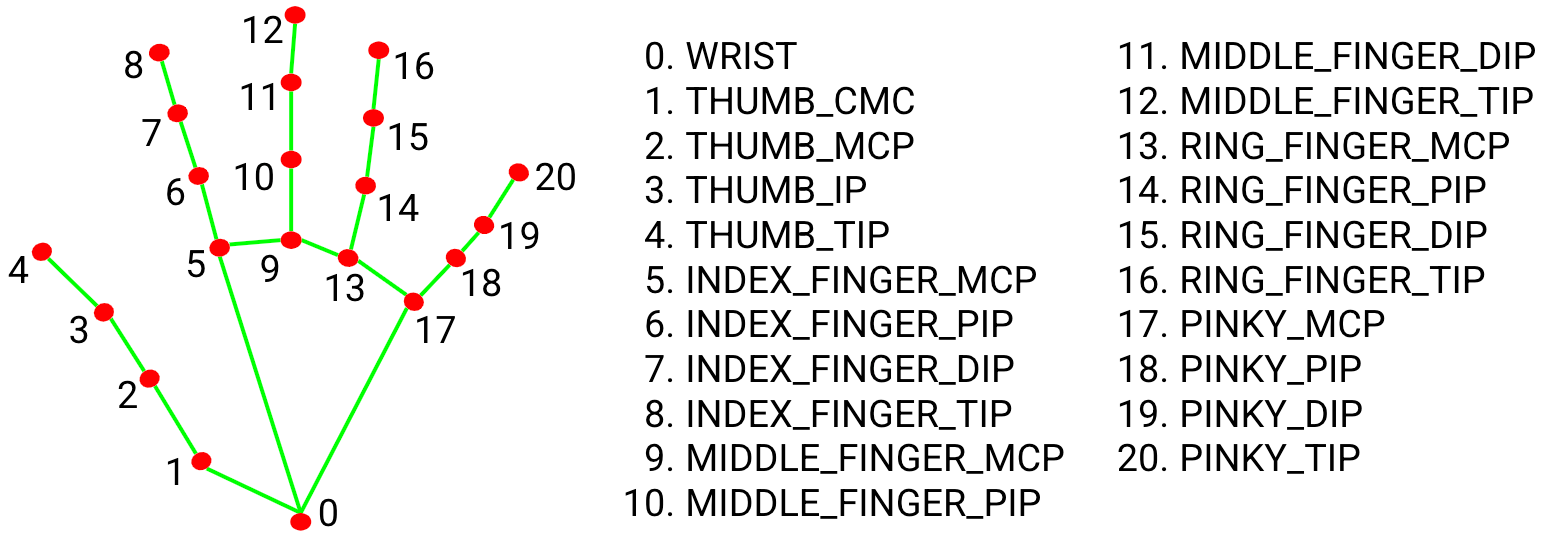

MediaPipe Hands is a high-fidelity hand and finger tracking solution. It uses machine learning (ML) to infer 21 3D hand landmarks from a single frame.

After a palm is detected in the full image, the hand landmark model precisely localizes the 21 3D hand joint coordinates inside the detected hand region by regression, that is, direct coordinate prediction. The model learns a consistent internal hand pose representation and is robust even to partially visible hands and self-occlusion.

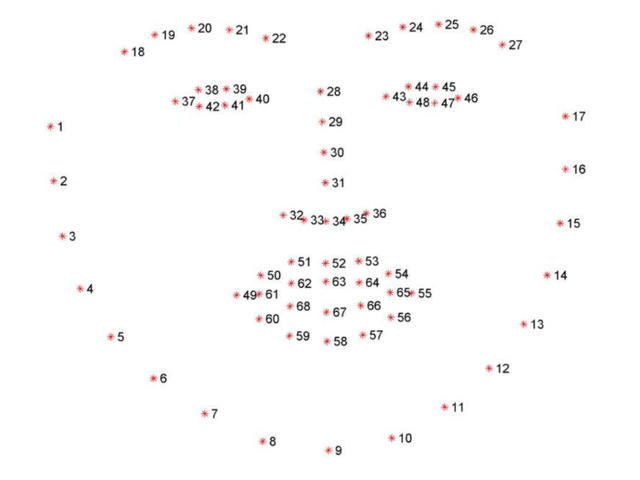

To obtain ground truth data, about 30K real-world images were manually annotated with 21 3D coordinates (the Z value is taken from the image depth map, where one exists for the corresponding coordinate). To better cover the range of possible hand poses and to provide additional supervision on the nature of hand geometry, a high-quality synthetic hand model is also rendered over various backgrounds and mapped to the corresponding 3D coordinates.
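As an illustration of how the 21 landmarks can be read from Python, the sketch below uses the legacy `mediapipe.solutions.hands` API together with OpenCV, assuming both are available in the installed versions. The helper names and the image path argument are hypothetical. Landmark x and y values are returned normalized by image width and height, so they are converted to pixels before use.

```python
from typing import List, Tuple


def to_pixels(norm_xy: Tuple[float, float], width: int, height: int) -> Tuple[int, int]:
    """Convert a normalized (x, y) landmark to pixel coordinates inside the image."""
    x = min(max(norm_xy[0], 0.0), 1.0)
    y = min(max(norm_xy[1], 0.0), 1.0)
    return min(int(x * width), width - 1), min(int(y * height), height - 1)


def detect_hand_landmarks(image_path: str) -> List[List[Tuple[int, int]]]:
    """Return, for each detected hand, its 21 landmarks in pixel coordinates."""
    # Imported lazily so the helper above can be used without these packages.
    import cv2
    import mediapipe as mp

    image = cv2.imread(image_path)
    if image is None:
        raise FileNotFoundError(image_path)
    height, width = image.shape[:2]

    with mp.solutions.hands.Hands(
        static_image_mode=True,
        max_num_hands=2,
        min_detection_confidence=0.5,
    ) as hands:
        # MediaPipe expects RGB input, while OpenCV loads images as BGR.
        results = hands.process(cv2.cvtColor(image, cv2.COLOR_BGR2RGB))

    if not results.multi_hand_landmarks:
        return []
    return [
        [to_pixels((lm.x, lm.y), width, height) for lm in hand.landmark]
        for hand in results.multi_hand_landmarks
    ]


print(to_pixels((0.5, 0.25), 640, 480))  # (320, 120)
```

Each inner list returned by `detect_hand_landmarks` has 21 entries; for example, index 0 is the wrist and index 8 is the tip of the index finger.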

10.4. MediaPipe Pose

MediaPipe Pose is an ML solution for high-fidelity body pose tracking. It infers 33 3D landmarks and a full-body background segmentation mask from RGB video frames, building on the BlazePose research that also powers the ML Kit Pose Detection API.

The landmark model in MediaPipe Pose predicts the locations of 33 pose landmarks.
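One common use of the 33 pose landmarks is measuring a joint angle. The sketch below is an illustration rather than part of the Transbot package: `joint_angle` and `left_elbow_angle` are hypothetical helpers, and the indices 11, 13, and 15 (left shoulder, left elbow, left wrist) follow the MediaPipe Pose landmark numbering. The landmark object is assumed to come from the legacy `mediapipe.solutions.pose` Python API.

```python
import math
from typing import Tuple

Point = Tuple[float, float]

LEFT_SHOULDER, LEFT_ELBOW, LEFT_WRIST = 11, 13, 15


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at vertex b, formed by the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("two of the points coincide")
    cosine = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, cosine))))


def left_elbow_angle(pose_landmarks, width: int, height: int) -> float:
    """Left elbow angle from a `results.pose_landmarks` object.

    x and y are normalized by image width and height, so they are scaled
    back to pixels first; otherwise angles are distorted on non-square images.
    """
    lm = pose_landmarks.landmark
    points = [
        (lm[i].x * width, lm[i].y * height)
        for i in (LEFT_SHOULDER, LEFT_ELBOW, LEFT_WRIST)
    ]
    return joint_angle(*points)


# Synthetic check: shoulder straight above the elbow, wrist to its side.
print(round(joint_angle((0.0, 1.0), (0.0, 0.0), (1.0, 0.0)), 1))  # 90.0
```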

10.5. dlib

dlib is used in the face special effects example (06_FaceLandmarks.py).

dlib is a modern C++ toolkit containing machine learning algorithms and tools for creating complex software in C++ to solve real-world problems. It is widely used in industry and academia, in fields such as robotics, embedded devices, mobile phones, and large-scale high-performance computing environments.

The dlib library uses 68 points to mark the important parts of the face; for example, points 18-22 mark the right eyebrow and points 49-68 mark the mouth. Use the get_frontal_face_detector function of the dlib library to detect faces, and use the shape_predictor_68_face_landmarks.dat model file to predict the facial landmarks.
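The 68-point layout and the detection flow can be sketched as follows. The index ranges are 1-based to match the text and follow the standard 68-point annotation scheme. The helper names and the path arguments are hypothetical, and the model file `shape_predictor_68_face_landmarks.dat` is assumed to be available locally.

```python
from typing import Dict, List, Tuple

# 1-based, inclusive index ranges of the 68-point face annotation.
FACE_REGIONS: Dict[str, Tuple[int, int]] = {
    "jaw": (1, 17),
    "right_eyebrow": (18, 22),
    "left_eyebrow": (23, 27),
    "nose": (28, 36),
    "right_eye": (37, 42),
    "left_eye": (43, 48),
    "mouth": (49, 68),
}


def region_of(point_number: int) -> str:
    """Name of the facial region that a 1-based landmark number belongs to."""
    for name, (first, last) in FACE_REGIONS.items():
        if first <= point_number <= last:
            return name
    raise ValueError("landmark number must be in 1..68")


def detect_landmarks(image_path: str, model_path: str) -> List[List[Tuple[int, int]]]:
    """Return the (x, y) landmarks of every face found in the image."""
    # Imported lazily so the index table above works without dlib installed.
    import dlib

    detector = dlib.get_frontal_face_detector()
    predictor = dlib.shape_predictor(model_path)
    image = dlib.load_rgb_image(image_path)

    faces = detector(image, 1)  # 1 = upsample once to find smaller faces
    shapes = [predictor(image, face) for face in faces]
    return [
        [(shape.part(i).x, shape.part(i).y) for i in range(shape.num_parts)]
        for shape in shapes
    ]


print(region_of(20), region_of(60))  # right_eyebrow mouth
```

Note that `shape.part(i)` is 0-based, so landmark number n in the table above corresponds to `shape.part(n - 1)`.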